TikTok and Meta risked safety to win algorithm arms race, whistleblowers say

BBC/Getty images

Social media giants made decisions which allowed more harmful content on people's feeds, after internal research into their algorithms showed how outrage fuelled engagement, whistleblowers told the BBC.

More than a dozen whistleblowers and insiders have laid bare how the companies took risks with safety on issues including violence, sexual blackmail and terrorism as they battled for users' attention.

An engineer at Meta, which owns Facebook and Instagram, described how he had been told by senior management to allow more "borderline" harmful content - which includes misogyny and conspiracy theories - in users' feeds to compete with TikTok.

"They sort of told us that it's because the stock price is down," the engineer said.

A TikTok employee gave the BBC rare access to the company's internal dashboards of user complaints - as well as other evidence of how staff had been instructed to prioritise several cases involving politicians over a series of reports of harmful posts featuring children.

Decisions were being made to "maintain a strong relationship" with political figures to avoid threats of regulation or bans, not because of the risks to users, the TikTok staffer said.

The whistleblowers who spoke to the BBC documentary, Inside the Rage Machine, offer a close-up view of how the industry responded following the explosive growth of TikTok, whose highly engaging algorithm for recommending short videos upended social media, leaving rivals scrambling to catch up.

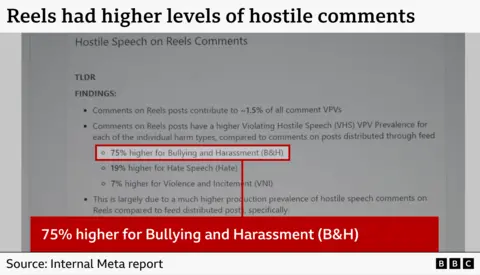

A senior Meta researcher, Matt Motyl, said the company's competitor to TikTok, Instagram Reels, was launched in 2020 without sufficient safeguards. Internal research shared with the BBC showed comments on Reels had significantly higher prevalence of bullying and harassment, hate speech, and violence or incitement than elsewhere on Instagram.

The company invested in 700 staff to grow Reels, while safety teams were refused two specialist staff to deal with protecting children and 10 more to help with the integrity of elections, another former senior Meta employee said.

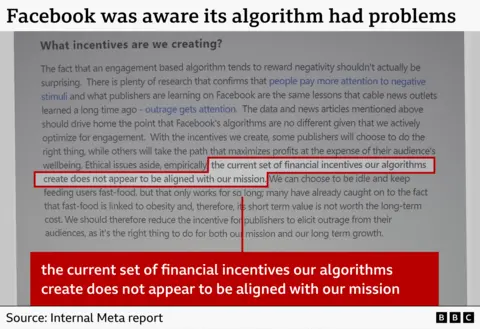

Motyl gave the BBC dozens of what he described as "high-level research documents showing all sorts of harms to users on these platforms". Among them was evidence that Facebook was aware of problems caused by its algorithm.

The algorithm offered content creators a "path that maximizes profits at the expense of their audience's wellbeing" and the "current set of financial incentives our algorithms create does not appear to be aligned with our mission" to bring the world closer together, according to one internal study.

It said Facebook can "choose to be idle and keep feeding users fast-food, but that only works for so long".

In response to the whistleblowers' claims, Meta said: "Any suggestion that we deliberately amplify harmful content for financial gain is wrong." TikTok said these were "fabricated claims" and the company invested in technology that prevented harmful content from ever being viewed.

The algorithms are a "black box" whose internal workings are difficult to scrutinise, said Ruofan Ding, who worked as a machine-learning engineer building TikTok's recommendation engine from 2020 until 2024.

It was hard to build systems like this that were completely safe, he said. "We have no control of the deep-learning algorithm in itself."

The engineers do not pay much attention to the content of the posts, he said. "To us, all the content is just an ID, a different number."

He said they relied on the content safety teams to ensure that harmful posts were removed so they could not be promoted by the algorithm, comparing their relationship to different teams working on parts of a car.

"There's the team that are responsible for the acceleration, the engine, right? So we expect the team working on the braking system was doing a good job," he said.

But Ding said as TikTok tried to improve its algorithm almost on a weekly basis to gain more market share, he started seeing more "borderline" content or problematic posts that only appeared after users had been browsing videos for a while.

Borderline content is a term used within social media companies usually to describe posts which are harmful but legal - including misogynistic, racist and sexualised posts, as well as conspiracy theory content.

The systems for users to indicate they do not want to see problematic content are not working, teenagers told the BBC, and they are still recommended violence and hateful content on major social media sites.

In one extreme case, another teenager, Calum - now 19 - said he had been "radicalised by algorithm" from the age of 14. The algorithm showed him content that outraged him and led him to adopt racist and misogynistic views, he said.

The videos "energised me, but not really in a good way," he said. "They just made me very kind of angry. It very much reflected the way I felt internally, that I was angry at the people around me."

Counter-terror police specialists in the UK who analyse thousands of posts on social media every year say they have seen the "normalisation" of antisemitic, racist, violent and far-right posts in recent months.

"People are more desensitised to real-world violence and they are not afraid to share their views," one officer said.

'Delete TikTok'

Over a period of several months during 2025, the BBC regularly spoke to a member of the trust and safety team at TikTok, who we are calling Nick. We were able to view the company's internal dashboard on his laptop, detailing the cases his specific trust and safety team was dealing with, and how it responded.

"If you're feeling guilty on a daily basis because of what you're instructed to do, at some point you can decide, should I say something?" said Nick.

The volume of cases the team was assessing was too great to keep on top of, he added, which left users, especially teenagers and children, at risk. Cuts and the reorganisation of some moderation teams - where some roles are being replaced by AI technology - have, in his view, limited the company's ability to deal effectively with this kind of content.

Material linked to "terrorism, sexual violence, physical violence, abuse, trafficking" appears to be increasing, the whistleblower said.

The reality of what the app recommends, and the action taken against harmful content, is "very different in a lot of aspects to what the sites are saying" in public, he added.

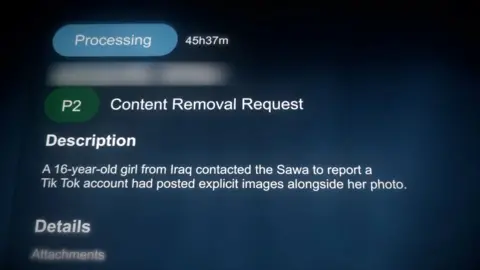

The BBC was shown evidence by Nick of how TikTok rated some relatively trivial cases involving politicians as a higher priority for review by the safety team than several cases involving harm to teenagers.

In one example, a political figure who had been mocked by being compared to a chicken was prioritised over a 17-year-old who reported being the victim of cyber bullying and impersonation in France and a 16-year-old in Iraq who complained that sexualised images purporting to be of her were being shared on the app.

Referring to the Iraq case, Nick said: "If you look at the country where this report comes from, it's very high risk because it's a minor and it involves sexual blackmail and then you can see the priority here. The urgency is not high."

Nick also showed examples of where some posts encouraging people to join terror groups or commit crimes had not been labelled as top priority.

When the trust and safety team had asked to prioritise cases involving young people over political cases, the whistleblower said, they were told not to and to continue to deal with the cases according to the ranking they had been given.

Nick said the reason for the prioritisation was that - in his view - the company ultimately cares less about children's safety than it does about maintaining a "strong relationship" with politicians and governments, to avoid regulations or bans which would harm its business.

Nick said when he and other staff members raised some of these concerns with management, they were not receptive to it because they "are not being exposed to this content on a day-to-day basis".

The trust and safety employee's blunt advice to parents with children using TikTok is: "Delete it, keep them as far away as possible from the app for as long as possible."

TikTok said it rejected the idea that political content is prioritised over the safety of young people and said the claim "fundamentally misrepresents the way their moderation systems operate".

The team Nick belongs to is part of a wider safety system which has multiple teams responsible for reviewing complaints about content. TikTok said: "Specialist workflows for certain issues do not result in the deprioritisation of child safety cases, which are handled by dedicated teams within parallel review structures."

A TikTok spokesperson said the criticisms "ignore the reality of how TikTok enables millions to discover new interests, find community, and supports a thriving creator economy".

The company said accounts for teens have more than 50 preset safety features and settings which are automatically turned on. It also said it invests in technology that helps prevent harmful content from ever being viewed, maintains strict recommendation policies and provides features for people to tailor their experiences.

'Do whatever we can to catch up'

In 2020, the algorithm arms race intensified when Reels was launched as part of Instagram, in response to TikTok taking the world by storm during the Covid pandemic.

Matt Motyl - who worked as a senior researcher at Facebook and its successor company Meta from 2021 - said this was the company's attempt to "mimic" the "unique product" TikTok had launched.

His job between 2019 and 2023 involved "running large-scale experiments on sometimes as many as hundreds of millions of people" - who often had "no idea" this was happening - testing out how content was ranked in feeds.

"Meta's products are used by north of three billion people and the more time they can keep you on there, the more ads they sell, the more money they make. But it's very important that they get this stuff right, because when they don't, really bad things happen," he said.

When it came to Reels in particular, Motyl said the approach was to move as quickly as possible whatever the impact on users. He said there was a "common trade-off between protecting people from harmful content and engagement".

Meta was struggling to prevent harm on Reels following its launch, according to one research paper he shared with the BBC. It suggests Reels posts had a higher prevalence of harmful comments than posts on the main Instagram feed: 75% higher for bullying and harassment, 19% higher for hate speech, and 7% higher for violence and incitement.

He said there was a "power imbalance" because safety staff had to get the agreement of teams in charge of Reels to introduce a new product or feature that would improve user safety. The Reels staff had "incentives to not let those products launch because toxic stuff gets more engagement than non-toxic," Motyl said.

Brandon Silverman, whose social media monitoring tool Crowdtangle was bought by Facebook in 2016, was party to some senior-level discussions during this time and described how CEO Mark Zuckerberg was "very paranoid" about competition.

"When he feels like there are potential competitive forces there's no amount of money that is too much," Silverman said.

He said during this period he saw safety teams struggling to get approval to recruit small numbers of staff while the company's focus was on expanding Reels. "There was another team that went, oh, we just got 700 for Instagram Reels. I was like, OK yeah," he said.

A former engineer at Meta, who we are calling Tim, said that, as the company sought to compete with TikTok, more borderline harmful content was allowed on the site. His team had been focused on reducing this content, until the "business positioning" changed.

"You're losing to TikTok and therefore your stock price must suffer. People started becoming paranoid and reactive and they were like, let's just do whatever we can to catch up. Where can we get like 2%, 3% revenue for the next quarter?" Tim said.

He said that decision to stop limiting content that was possibly harmful but not illegal - and that users were engaging with - was made by a senior vice-president of Meta who Tim believed reported directly to Mark Zuckerberg.

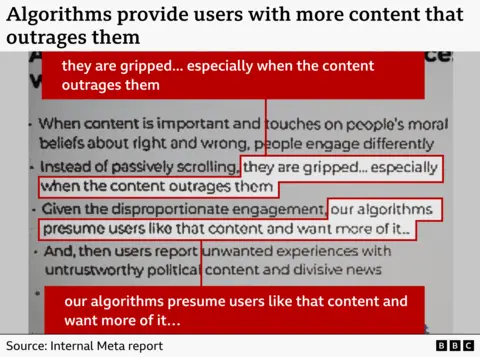

Around the time when Facebook was arguing that it was just a "mirror to society", internal documents shared with the BBC by Motyl, the senior researcher, reveal how the company knew it was amplifying content which angered people and even incited harm.

The documents outline how sensitive content - which can include material that touches on people's moral beliefs or posts that incite violence - is more likely to trigger a reaction and engagement on the site, especially if it causes outrage.

"Given the disproportionate engagement, our algorithms presume that users like that content and want more of it," the study says.

Silverman said Meta's leadership seemed initially unsure how to respond to toxic content on the platform and there was a period of time when the company was "genuinely introspective".

But he said their stance "began to calcify into a sort of defensiveness". Their attitude was that "we're not responsible for all of polarisation in society", he said.

"Nobody's saying you're responsible for all polarisation. We're just saying you contribute to it, and probably in ways where you don't have to. If you just made a few changes, you might not contribute to it as much," Silverman said.

A Meta spokesperson denied the whistleblowers' claims. "The truth is, we have strict policies to protect users on our platforms and have made significant investments in safety and security over the last decade," the spokesperson said.

The company said it had "made real changes to protect teens online" including introducing a new Teen Accounts feature "with built-in protections and tools for parents to manage their teens' experiences".

Additional reporting by Robert Farquhar, Daisy Bata and Ez Roberts
